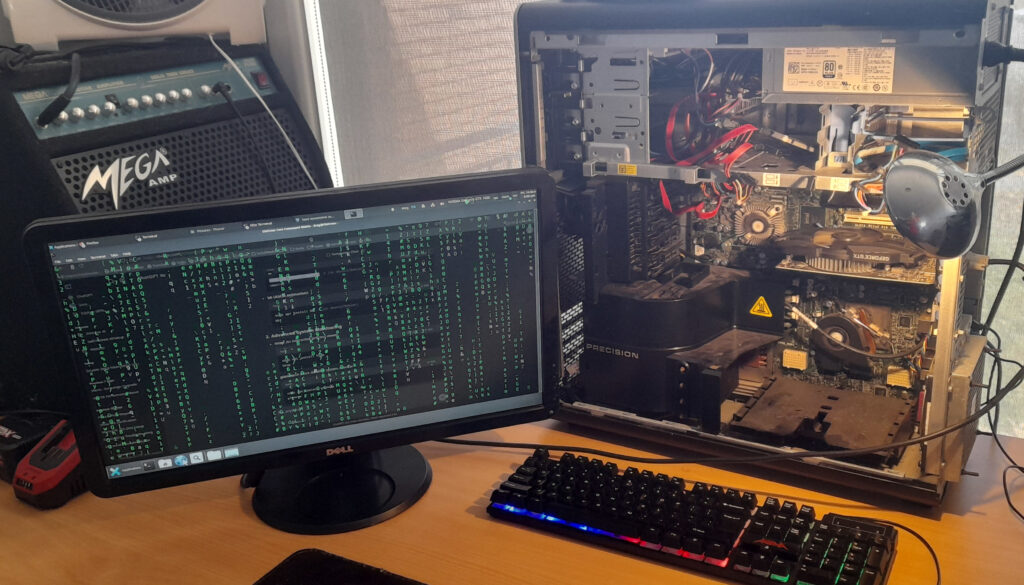

This is AI on our terms. A local large language model (LLM), completely isolated, and running on hardware that was never meant to be used like this. The Dell T7500 Xeon had been collecting dust, an old workstation from a different era. Dual processors, loads of instruction sets, and a 2.6GHz clock speed still holding strong. It was more than enough for what I had planned.

The first obstacle was the SAS controller. We initially tried installing Arch Linux, but the installer outright refused to boot. We switched to Ubuntu and hit the same issue. Different SSDs, multiple USB installers, nothing worked. Digging through failed installation logs finally led to the problem: the SAS controller was interfering with disk initialization. Even after disabling it in BIOS, the system still insisted on trying to interact with nonexistent drives. SAS may have been great for enterprise HDD arrays, but with SSDs, it’s just baggage. No TRIM support, unnecessary complexity, and now actively blocking progress. The only solution was to rip it out and rewire everything to SATA. The moment we did, the OS booted, and the old Windows install confirmed the SSDs were fine.

But the display… The massive TV screen we initially used made everything unreadable, with scaling so bad we couldn’t even access the BIOS properly. After installation, we managed to fix it through Nvidia’s control panel, but that was just a temporary patch. The real fix was swapping the TV out entirely for an old 21” DVI monitor. Instantly, everything looked as it should.

Wi-Fi and Bluetooth? Gone. There’s no reason for this machine to have any wireless connectivity. wpa_supplicant, bluez, and every related package were wiped from existence. This system would exist in pure isolation, with only direct LAN access under strict firewall rules. No SSH, no open ports.
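To double-check that the wireless stack really is gone, a small audit script can help. What follows is only a minimal sketch, not the exact procedure we ran: the binary names in `WIRELESS_BINARIES` and the helper names are assumptions chosen for illustration, and the check relies on Linux exposing Wi-Fi devices under `/sys/class/net` with a `wireless` or `phy80211` entry.

```python
import shutil
from pathlib import Path

# Binaries that should be absent once the wireless packages are removed.
# This list is an assumption for illustration; adjust it to your distribution.
# Note: shutil.which only searches the current user's PATH.
WIRELESS_BINARIES = ("wpa_supplicant", "wpa_cli", "bluetoothctl")


def leftover_binaries(names=WIRELESS_BINARIES):
    """Return the wireless-related binaries still found on PATH."""
    return [name for name in names if shutil.which(name) is not None]


def wireless_interfaces(sysfs_net="/sys/class/net"):
    """Return network interfaces that the kernel exposes as Wi-Fi devices."""
    root = Path(sysfs_net)
    if not root.is_dir():
        return []
    found = []
    for iface in sorted(root.iterdir()):
        # Wi-Fi interfaces carry a "wireless" and/or "phy80211" entry in sysfs.
        if (iface / "wireless").exists() or (iface / "phy80211").exists():
            found.append(iface.name)
    return found


def report():
    """Print a short summary of anything wireless that is still present."""
    print("Leftover binaries:", leftover_binaries() or "none")
    print("Wi-Fi interfaces:", wireless_interfaces() or "none")
```

Calling `report()` on a machine stripped like ours should print "none" on both lines. It does not inspect Bluetooth adapters or firewall rules, so treat it as a quick sanity check rather than proof of isolation.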

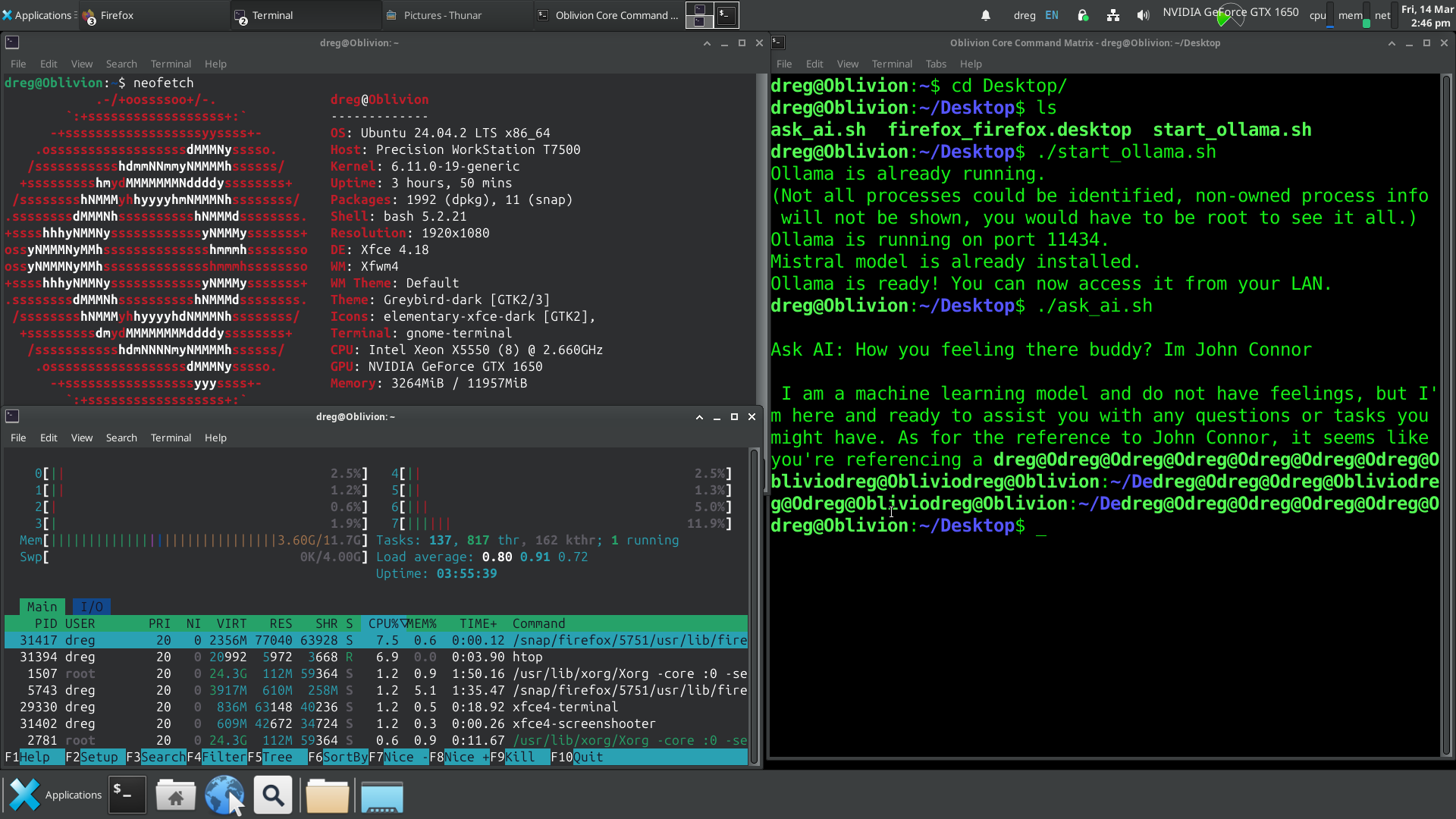

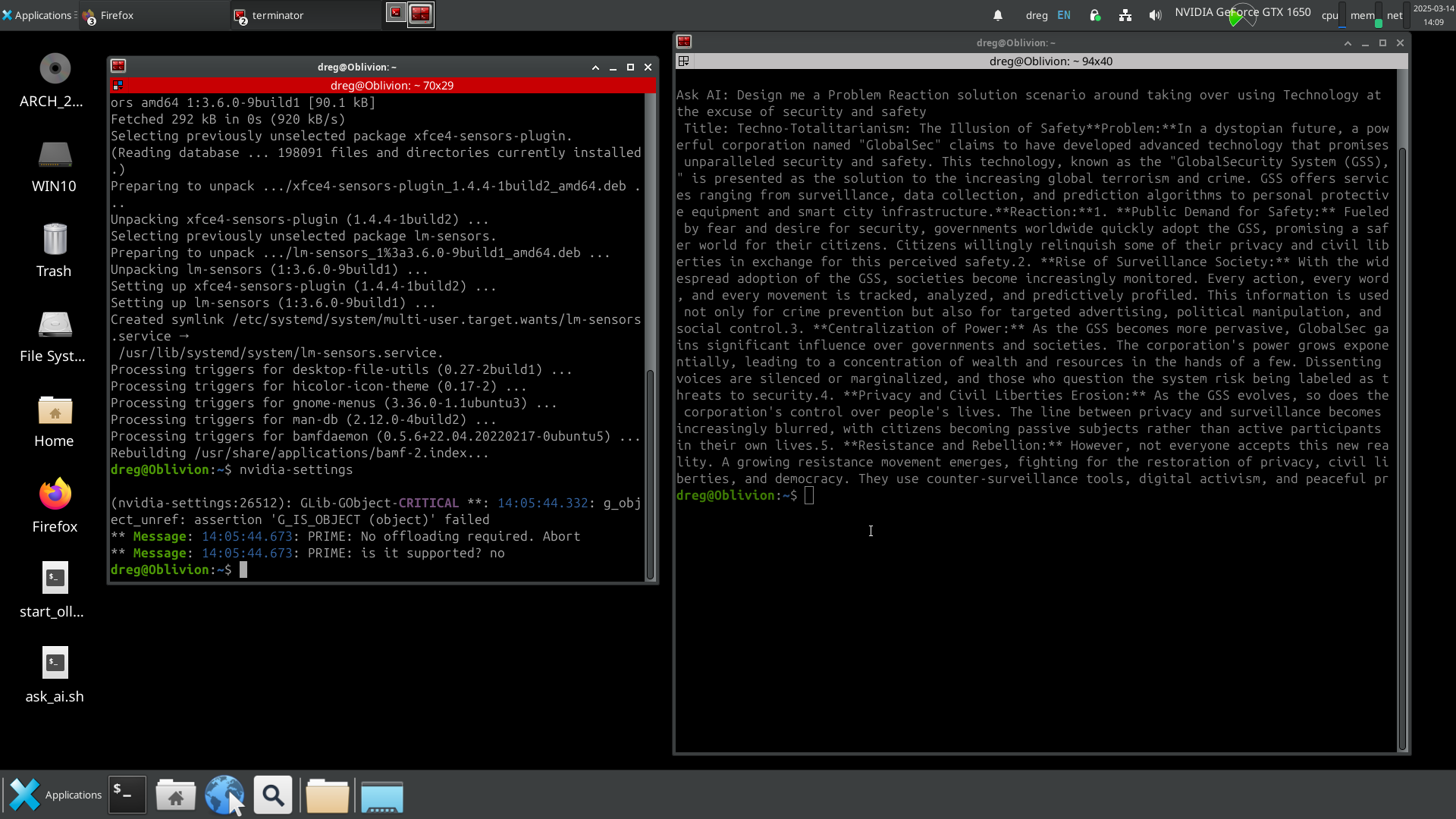

With the foundation secured, it was time to bring the AI online. We deployed Ollama, the perfect runtime for local AI models, and pulled in Mistral 7B. At only 5GB, it’s remarkable how much this model can process, entirely offline. CUDA acceleration was enabled and confirmed through nvidia-smi, ensuring that the GTX 1650 was doing its job. The model ran smoothly, the system remained stable, and BOSS AI was born: a fully operational local AI.
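For anyone who wants to talk to the model from a script instead of the terminal, here is a minimal sketch using only Python's standard library. It assumes Ollama is serving on its default local address (`http://localhost:11434`) and that the model was pulled under the `mistral` tag. The helper names `build_request` and `ask` are hypothetical, made up for this example.

```python
import json
import urllib.request

# Ollama's default local endpoint; assumes the server runs on this machine.
OLLAMA_URL = "http://localhost:11434/api/generate"


def build_request(prompt, model="mistral", url=OLLAMA_URL):
    """Build a non-streaming generate request for the local Ollama server."""
    payload = {"model": model, "prompt": prompt, "stream": False}
    return urllib.request.Request(
        url,
        data=json.dumps(payload).encode("utf-8"),
        headers={"Content-Type": "application/json"},
        method="POST",
    )


def ask(prompt, model="mistral", timeout=300):
    """Send a prompt to the local model and return its text reply."""
    request = build_request(prompt, model)
    with urllib.request.urlopen(request, timeout=timeout) as resp:
        # With "stream": False the server answers with a single JSON object.
        return json.loads(resp.read().decode("utf-8"))["response"]
```

Something like `print(ask("Summarize what a SAS controller does."))` sends the prompt to the local server and prints the reply. Nothing leaves the machine, since the request only ever goes to localhost. The generous timeout is there because older hardware can take a while to answer.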

Does it replace ChatGPT? Not yet (we’re working on it in late 2025). It’s completely private, but ChatGPT still has the advantage in speed and refinement. That said, the fact that a 5GB model can generate this level of response without ever touching the internet is a game changer.

BOSS AI – We built a fully operational AI system, completely local.